Continuous glucose monitors have transformed clinical research from single-point blood draws into continuous metabolic surveillance. As of 2025, more than 4,200 active clinical trials listed on ClinicalTrials.gov include CGM as a primary or secondary outcome measure—a 340 percent increase from 2019. The device that patients wear on their arms is the same technology generating evidence that shapes FDA approvals, treatment guidelines, and the future of metabolic medicine.

Why Researchers Switched from A1C to CGM Endpoints

For 30 years, A1C (glycated hemoglobin) was the gold-standard endpoint in diabetes clinical trials. It remains important, but its limitations have driven researchers toward CGM-based metrics:

**A1C is a 3-month average that conceals daily variation.** Two patients can have identical A1C values of 7.0 percent: one with stable glucose between 120 and 160 mg/dL and another oscillating between 50 and 300 mg/dL. Their glycemic experience is fundamentally different, but A1C cannot distinguish between them.
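A1C tracks average glucose, so a short sketch makes the point concrete. The two traces below are synthetic, hypothetical values chosen to share a mean of 140 mg/dL. `percent_where` is an illustrative helper, not a standard API. Readings are assumed to be evenly spaced, so the share of readings approximates the share of time.

```python
from statistics import mean

def percent_where(readings, predicate):
    """Percentage of readings (mg/dL) for which predicate(reading) is True."""
    if not readings:
        raise ValueError("no CGM readings")
    return 100.0 * sum(1 for g in readings if predicate(g)) / len(readings)

# Synthetic, illustrative traces in mg/dL (not patient data); both average 140.
stable = [120, 160, 120, 160] * 2
volatile = [50, 300, 50, 160] * 2

for name, trace in [("stable", stable), ("volatile", volatile)]:
    tir = percent_where(trace, lambda g: 70 <= g <= 180)  # 70-180 mg/dL inclusive
    low = percent_where(trace, lambda g: g < 70)           # below 70 mg/dL
    print(f"{name}: mean={mean(trace):.0f} mg/dL, TIR={tir:.0f}%, below 70={low:.0f}%")

# stable: mean=140 mg/dL, TIR=100%, below 70=0%
# volatile: mean=140 mg/dL, TIR=25%, below 70=50%
```

The averages are identical, yet one trace spends half its readings below 70 mg/dL. This is the information an average-based measure cannot expose.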

**A1C does not capture hypoglycemia.** Severe low blood sugar events are the most dangerous acute complication of insulin therapy, yet A1C reflects only the average, not the lows. A patient who frequently dips below 54 mg/dL but also spends time above 200 mg/dL may have a "good" A1C of 6.5 percent while experiencing dangerous glycemic instability.

**A1C has biological confounders.** Conditions affecting red blood cell lifespan—iron deficiency anemia, hemoglobinopathies, chronic kidney disease—alter A1C values independent of actual glucose levels. CGM measures glucose directly, bypassing these confounders.

In 2019, the FDA accepted Time in Range as a valid endpoint for regulatory submissions, signaling that CGM-derived metrics had achieved sufficient scientific credibility to support drug and device approvals.

The Key CGM Metrics in Clinical Research

Time in Range (TIR)

Time in Range measures the percentage of CGM readings within a target glucose range during a defined period (typically 14-90 days). The international consensus ranges, established in 2019 by the Advanced Technologies & Treatments for Diabetes (ATTD) consortium, are:

- **70-180 mg/dL:** Standard range for diabetes (target: >70% of the day)
- **70-140 mg/dL:** Tighter range for prediabetes and wellness research
- **<70 mg/dL:** Below range / Level 1 hypoglycemia (target: <4%)
- **<54 mg/dL:** Significantly below range / Level 2 hypoglycemia (target: <1%)
- **>250 mg/dL:** Significantly above range (target: <5%)
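Applied to raw readings, these ranges reduce to simple percentage counts. The sketch below assumes evenly spaced readings (for example, one every 5 minutes) with no sensor gaps, so the percentage of readings stands in for the percentage of time. Real analyses also have to handle missing data. `RangeReport`, `range_report`, and `meets_diabetes_targets` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass

@dataclass
class RangeReport:
    tir_70_180: float   # % of readings 70-180 mg/dL, inclusive
    tir_70_140: float   # % of readings 70-140 mg/dL, inclusive
    below_70: float     # % of readings < 70 mg/dL (includes readings < 54)
    below_54: float     # % of readings < 54 mg/dL
    above_250: float    # % of readings > 250 mg/dL

def _pct(readings, predicate):
    return 100.0 * sum(1 for g in readings if predicate(g)) / len(readings)

def range_report(readings):
    """Summarize evenly spaced CGM readings (mg/dL) against the consensus ranges."""
    if not readings:
        raise ValueError("no CGM readings")
    return RangeReport(
        tir_70_180=_pct(readings, lambda g: 70 <= g <= 180),
        tir_70_140=_pct(readings, lambda g: 70 <= g <= 140),
        below_70=_pct(readings, lambda g: g < 70),
        below_54=_pct(readings, lambda g: g < 54),
        above_250=_pct(readings, lambda g: g > 250),
    )

def meets_diabetes_targets(report):
    """Compare a report with the diabetes targets listed in the ranges above."""
    return {
        "70-180 > 70%": report.tir_70_180 > 70,
        "<70 under 4%": report.below_70 < 4,
        "<54 under 1%": report.below_54 < 1,
        ">250 under 5%": report.above_250 < 5,
    }

# Ten hypothetical readings (mg/dL) for illustration.
readings = [65, 95, 110, 130, 150, 175, 190, 260, 140, 120]
report = range_report(readings)
print(report)
print(meets_diabetes_targets(report))
```

With these ten readings, time in the 70-180 mg/dL range is exactly 70 percent. That narrowly misses the ">70%" target. One reading below 70 mg/dL already puts time below range at 10 percent.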

A 2019 study in Diabetes Care (Beck et al.) demonstrated that each 5-percentage-point improvement in Time in Range (70-180 mg/dL) corresponded to a 0.5-percentage-point reduction in A1C and a measurable decrease in diabetes complications. This validated TIR as a clinically meaningful surrogate endpoint.

Glucose Management Indicator (GMI)

GMI converts the mean CGM glucose over 14+ days into an estimated A1C equivalent. Clinical trials use GMI to provide continuity with the A1C literature while leveraging CGM's granularity. The formula: GMI (%) = 3.31 + (0.02392 × mean glucose in mg/dL).
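The formula is a single linear step, so a worked sketch is short. `gmi_percent` is a hypothetical helper name. It assumes the readings already span the 14+ days the metric calls for and does not check that itself.

```python
from statistics import mean

def gmi_percent(readings_mg_dl):
    """Glucose Management Indicator: GMI (%) = 3.31 + 0.02392 * mean glucose (mg/dL).

    Assumes the readings cover at least 14 days; this sketch does not verify that.
    """
    if not readings_mg_dl:
        raise ValueError("no CGM readings")
    return 3.31 + 0.02392 * mean(readings_mg_dl)

# Mean of 150 mg/dL: 3.31 + 0.02392 * 150 = 3.31 + 3.588 = 6.898, i.e. about 6.9%.
print(round(gmi_percent([140, 150, 160]), 1))  # 6.9
```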

Coefficient of Variation (CV)

CV measures glucose variability. It is the standard deviation of glucose divided by the mean, expressed as a percentage, so it captures the size of fluctuations relative to average glucose rather than the average itself. A CV below 36 percent is considered "stable" in diabetes research; below 20 percent is typical for non-diabetic individuals. High CV is an independent risk factor for hypoglycemia and is increasingly included in clinical trial safety analyses.
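A minimal sketch of the CV calculation and the 36 percent cutoff follows. `glucose_cv_percent` and `is_stable` are hypothetical names. One assumption matters here: the sketch uses the sample standard deviation (`statistics.stdev`). Some analysis tools use the population form (`statistics.pstdev`), which gives a slightly lower CV for short series.

```python
from statistics import mean, stdev

def glucose_cv_percent(readings):
    """Coefficient of variation (%) = sample standard deviation / mean * 100."""
    if len(readings) < 2:
        raise ValueError("need at least two readings")
    return 100.0 * stdev(readings) / mean(readings)

def is_stable(readings, threshold=36.0):
    """True when CV falls below the stability cutoff (36% by default)."""
    return glucose_cv_percent(readings) < threshold

# Hypothetical readings: mean 140 mg/dL, sample SD 40 mg/dL, so CV = 40/140 ≈ 28.6%.
sample = [100, 140, 180]
print(round(glucose_cv_percent(sample), 1))  # 28.6
print(is_stable(sample))                     # True
```

Because CV divides by the mean, two people with the same standard deviation can land on opposite sides of the cutoff if their average glucose differs.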

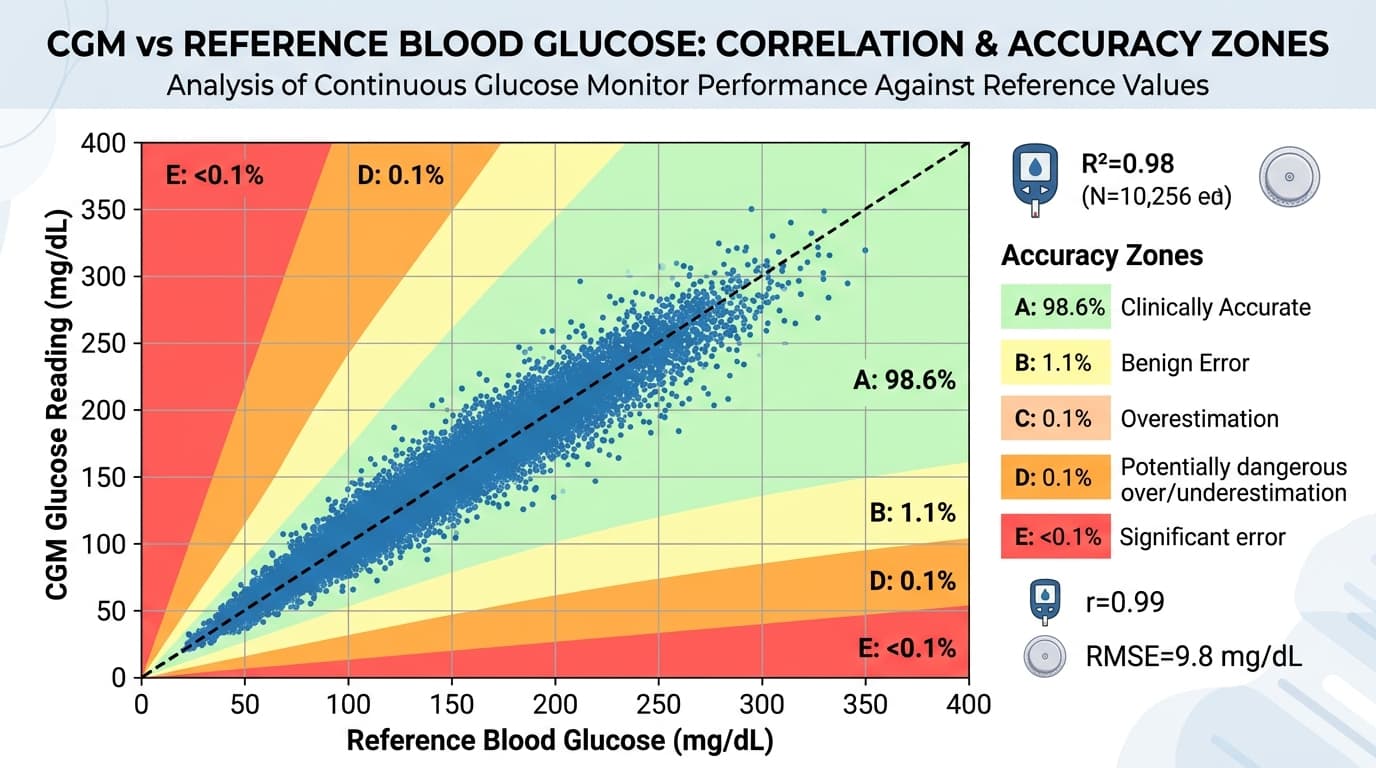

MARD (Mean Absolute Relative Difference)

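MARD is an accuracy metric for the CGM device itself, not for the patient's glucose control. It averages the absolute percentage difference between paired CGM readings and laboratory reference measurements, so a lower MARD means closer agreement. In device accuracy studies, such as those submitted for FDA clearance, MARD is a headline endpoint. A MARD below 10 percent (ideally below 9 percent) is widely treated as the benchmark for clinical accuracy. However, FDA's integrated CGM (iCGM) criteria are defined by the share of readings that fall within set error bands, not by MARD alone. Current devices achieve 7.9-9.0 percent MARD.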
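The calculation can be sketched in a few lines, assuming each CGM value has already been time-matched to a reference measurement. `mard_percent` is a hypothetical helper, and the paired values below are invented for illustration.

```python
def mard_percent(cgm, reference):
    """Mean absolute relative difference (%) of paired CGM vs. reference readings.

    Assumes cgm[i] and reference[i] are time-matched values in the same unit.
    """
    if not cgm or len(cgm) != len(reference):
        raise ValueError("need equal-length, non-empty paired readings")
    rel_errors = [abs(c - r) / r for c, r in zip(cgm, reference)]
    return 100.0 * sum(rel_errors) / len(rel_errors)

# Hypothetical pairs (mg/dL): relative errors of 10%, 5%, and about 11.1%.
cgm = [110, 95, 200]
ref = [100, 100, 180]
print(round(mard_percent(cgm, ref), 2))  # 8.7
```

Because errors are taken relative to the reference, a 10 mg/dL miss counts far more at 60 mg/dL than at 250 mg/dL. This is one reason accuracy is also reported separately for the low glucose range.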

How CGMs Are Deployed in Clinical Trials

Blinded vs. Unblinded Wear

In many trials, participants wear "blinded" CGM sensors—the device records data continuously but the screen is covered or the readings are disabled so the participant cannot see their glucose. This eliminates the behavioral confound: if participants can see their glucose, they may change their behavior (eating differently, exercising more), which could obscure the treatment effect being studied.

Unblinded CGM wear is used in trials where real-time glucose visibility is part of the intervention—for example, studies evaluating whether CGM biofeedback improves dietary choices or weight loss outcomes.

Professional CGM vs. Personal CGM

Professional CGMs (like the Abbott Libre Pro and Dexcom G6 Pro) are configured for clinical use. They record data over a 10- to 14-day wear period, typically without displaying readings to the patient, and the data are then downloaded at the clinic. Personal CGMs (the consumer devices most people know) stream real-time data to a smartphone app.

Research increasingly uses personal CGMs in ambulatory (free-living) study designs, where participants go about their normal lives while the CGM uploads data to a cloud platform. This approach generates more ecologically valid data than in-clinic protocols, at lower cost and greater scale.

Landmark Trials That Used CGM Data

**DCCT/EDIC Extension Studies:** The foundational diabetes trials have incorporated CGM sub-studies to correlate Time in Range with long-term complication rates (retinopathy, nephropathy, neuropathy). Results published in 2021 confirmed that Time in Range above 70 percent is associated with significantly lower complication risk.

**FLAT-SUGAR Trial (2023):** Compared semaglutide versus empagliflozin for glucose variability reduction using CGM as the primary endpoint. Semaglutide reduced CV from 28 percent to 19 percent versus empagliflozin's reduction to 22 percent, demonstrating CGM's ability to differentiate treatments that A1C alone cannot distinguish.

**PREDICT 1 and 2:** The personalized nutrition studies from King's College London used CGMs in 1,002 and 1,100 participants respectively to map individual glucose responses to standardized meals. The resulting dataset is the largest CGM-based nutrition database in the world and has been used to develop machine learning models for predicting personal food responses.

The Future: Real-World Evidence from Consumer CGMs

The line between clinical research and consumer health is blurring. Companies like Dexcom, Abbott, and Levels are partnering with academic researchers to analyze anonymized CGM data from tens of thousands of consumer device users—creating real-world evidence datasets that dwarf traditional clinical trials.

A 2024 study in npj Digital Medicine analyzed CGM data from 50,000 Levels users and identified population-level patterns in meal timing, glucose variability, and exercise responses that no single clinical trial could capture. This "research at scale" model promises to accelerate the development of personalized nutrition algorithms, refine clinical guidelines, and expand CGM evidence beyond diabetes into obesity, cardiovascular disease, and metabolic wellness.

For consumers, this means that if you opt into data sharing, every sensor you wear contributes to a growing body of evidence that can improve CGM products and metabolic health recommendations for millions of people. For a closer look at the accuracy specifications that make this research possible, our MARD explainer covers how CGM accuracy is measured and compared across devices.