When evaluating a continuous glucose monitor, accuracy is the single most important specification—and the metric the industry uses to measure it is called MARD: Mean Absolute Relative Difference. Every CGM manufacturer reports MARD in their clinical data, and understanding this number is essential for making an informed purchasing decision.

What MARD Is

MARD quantifies the average difference between a CGM reading and a simultaneous laboratory blood glucose reference measurement. It is expressed as a percentage. A MARD of 9 percent means that, on average, the CGM reading deviates from the true blood glucose value by 9 percent.

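In practical terms: if your actual blood glucose is 100 mg/dL and the CGM has a 9 percent MARD, a reading with an average-sized error would be about 91 or 109 mg/dL. Because MARD is an average, many readings will land closer to 100 mg/dL and some will land further away.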
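An average can hide larger individual errors. The short Python sketch below averages six absolute relative differences to show this. The values are invented for illustration, not taken from any study, and the variable names are hypothetical.

```python
# Hypothetical per-reading absolute relative differences, in percent.
# These are invented for illustration; they are not from any CGM study.
ards = [2.0, 4.0, 6.0, 9.0, 12.0, 21.0]

mard = sum(ards) / len(ards)              # MARD is the mean of the individual errors
outside = [a for a in ards if a > mard]   # individual readings worse than the average

print(f"MARD = {mard:.1f}%")
print(f"{len(outside)} of {len(ards)} readings exceed {mard:.1f}%")
```

The six errors average exactly 9 percent, yet two of them exceed it, one by more than double.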

How MARD Is Calculated

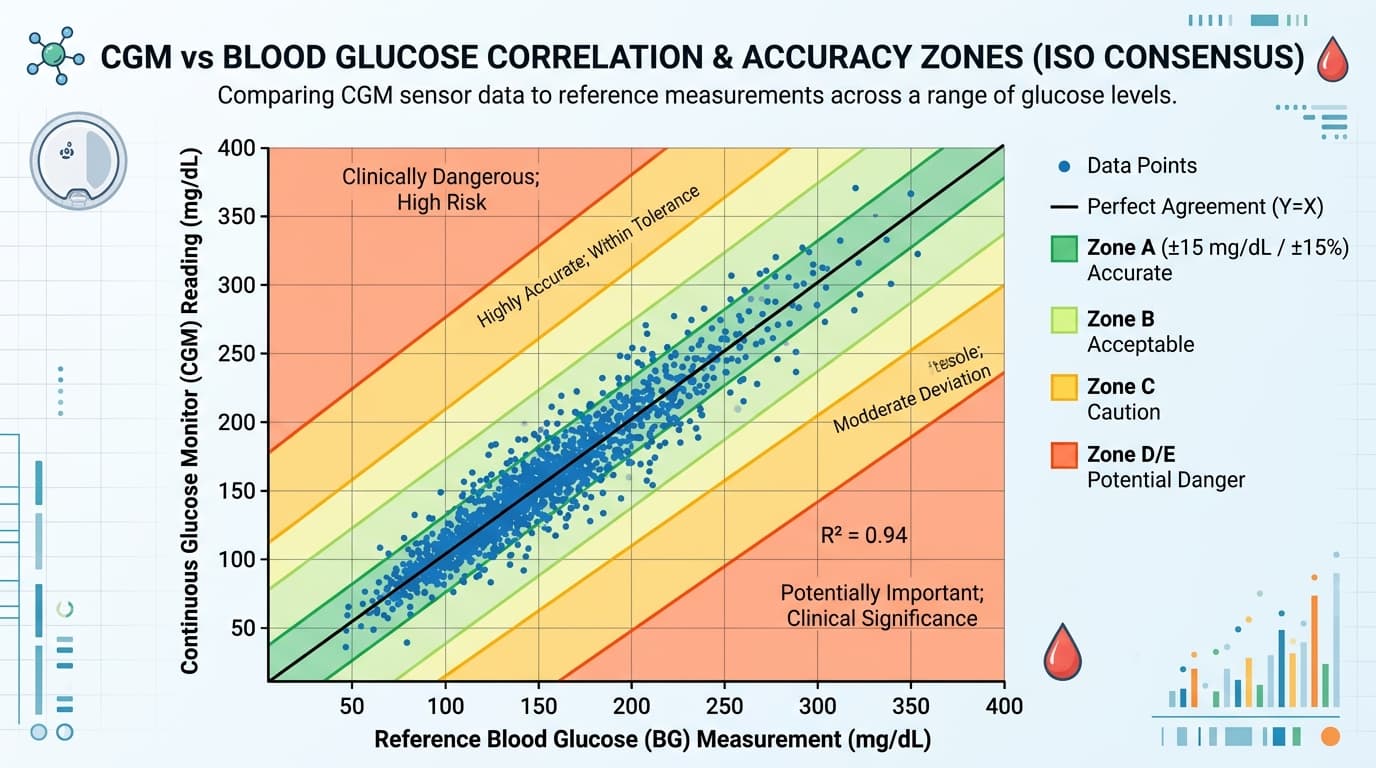

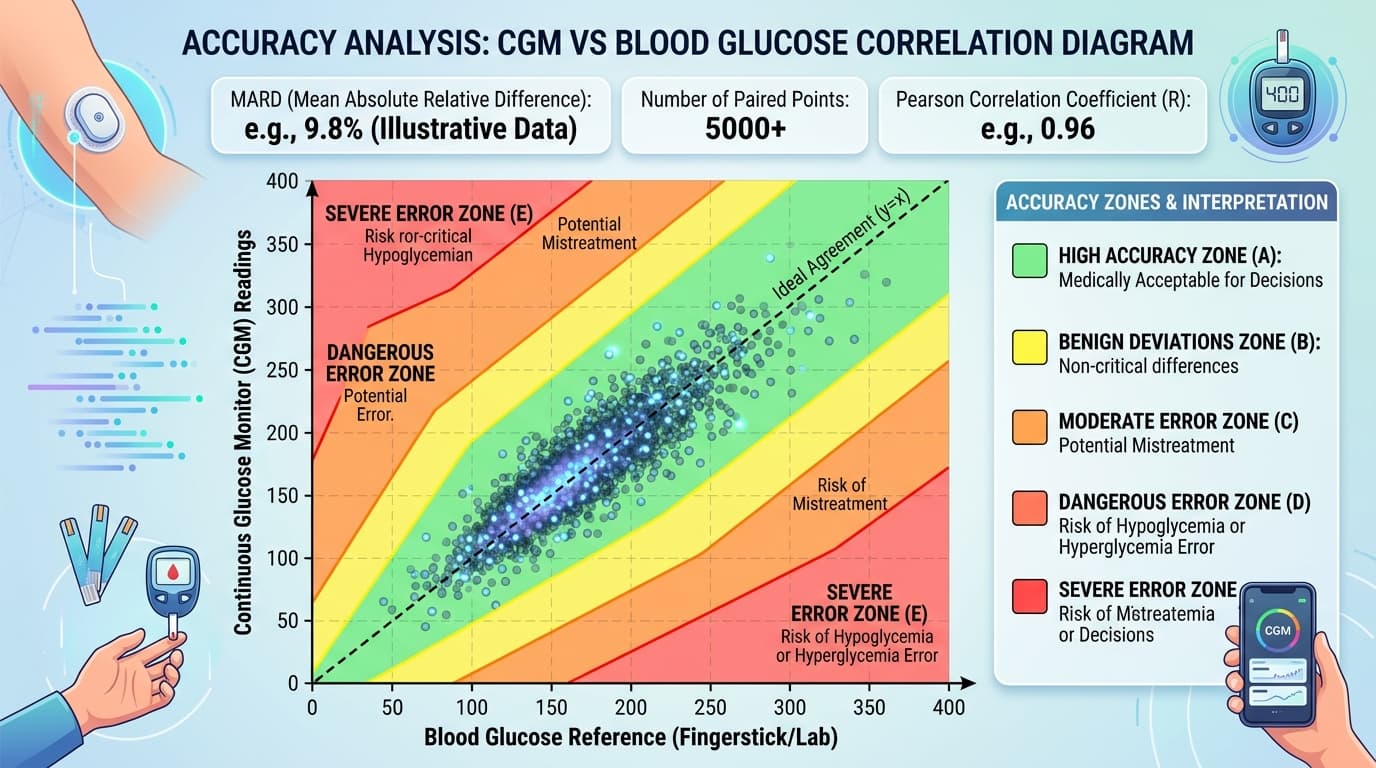

In a clinical accuracy study, participants wear the CGM sensor while periodically providing venous or fingerstick blood samples that are analyzed by a laboratory-grade glucose analyzer (typically a YSI analyzer). Each reference measurement is paired with the CGM reading closest to it in time, and the absolute relative difference is calculated for each pair:

**Absolute Relative Difference = |CGM value − Reference value| ÷ Reference value × 100**
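The formula for a single paired measurement translates directly into a small function. This is a minimal sketch; the function name and the guard against a non-positive reference are illustrative choices, not part of any study protocol.

```python
def absolute_relative_difference(cgm_mg_dl: float, reference_mg_dl: float) -> float:
    """Return |CGM - reference| / reference * 100, in percent."""
    if reference_mg_dl <= 0:
        raise ValueError("reference value must be positive")
    # Multiply before dividing so round numbers stay exact in floating point.
    return 100 * abs(cgm_mg_dl - reference_mg_dl) / reference_mg_dl


# A CGM reading of 108 mg/dL paired with a lab reference of 120 mg/dL:
print(absolute_relative_difference(108, 120))  # 10.0
```

The absolute value makes over-reads and under-reads count the same: a reading of 132 mg/dL against the same 120 mg/dL reference also gives 10 percent.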

MARD is the arithmetic mean of all individual absolute relative differences across all participants and all measurement pairs in the study. A typical CGM accuracy study involves 100-400 participants and generates 10,000-50,000 paired data points.
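Averaging over every pair gives MARD. The sketch below assumes the paired data is already available as (CGM, reference) tuples in mg/dL; the four pairs are invented for illustration, whereas a real study would have thousands.

```python
from statistics import mean


def mard(pairs: list[tuple[float, float]]) -> float:
    """Mean absolute relative difference (percent) over (cgm, reference) pairs."""
    if not pairs:
        raise ValueError("need at least one paired measurement")
    return mean(100 * abs(cgm - ref) / ref for cgm, ref in pairs)


# Hypothetical (CGM, reference) pairs in mg/dL; not real study data.
pairs = [(95, 100), (150, 140), (62, 70), (210, 200)]
print(f"MARD = {mard(pairs):.2f}%")
```

The individual errors here are 5.0, about 7.1, about 11.4, and 5.0 percent, so the MARD is about 7.14 percent. The largest error comes from the lowest reference value.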

What the Numbers Mean

Lower MARD values indicate better accuracy. Here is how current CGM devices compare:

- **Dexcom G7 / G7 15-Day:** 8.2% overall MARD
- **Abbott FreeStyle Libre 3 Plus:** 7.9% overall MARD
- **Abbott FreeStyle Libre 3:** 7.9% overall MARD
- **Medtronic Guardian 4:** 8.7% overall MARD
- **Eversense E3:** 8.5% overall MARD (after day 1)
- **Dexcom Stelo (OTC):** 9.0% overall MARD

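For context, the fingerstick blood glucose meters that most people with diabetes use at home are held to a different kind of standard: FDA guidance for over-the-counter meters calls for 95 percent of readings to fall within ±15 percent of the reference value. Because that is a bound on most readings rather than an average, it is not directly comparable with MARD. Earlier generations of CGMs had MARD values in the 12-15 percent range, so the improvement to roughly 8-9 percent represents a generational leap in sensor technology.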
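A small sketch shows why a band criterion and an average measure different things. It assumes a criterion of at least 95 percent of readings within ±15 percent of the reference, and it uses invented meter readings. The helper names (`relative_error_pct`, `share_within`, `mard`) are hypothetical.

```python
# Assumed criterion for illustration: at least 95% of readings within ±15% of reference.
ASSUMED_BAND_PCT = 15.0
ASSUMED_REQUIRED_SHARE = 0.95


def relative_error_pct(reading: float, reference: float) -> float:
    """Absolute relative difference of one reading, in percent."""
    return 100 * abs(reading - reference) / reference


def share_within(pairs, band_pct):
    """Fraction of (reading, reference) pairs whose relative error is within band_pct."""
    hits = sum(1 for reading, ref in pairs if relative_error_pct(reading, ref) <= band_pct)
    return hits / len(pairs)


def mard(pairs):
    """Mean absolute relative difference over (reading, reference) pairs, in percent."""
    return sum(relative_error_pct(reading, ref) for reading, ref in pairs) / len(pairs)


# Invented readings against a 100 mg/dL reference; not real device data.
pairs = [(100, 100), (104, 100), (93, 100), (112, 100), (120, 100)]

share = share_within(pairs, ASSUMED_BAND_PCT)
print(f"MARD: {mard(pairs):.1f}%")
print(f"within ±{ASSUMED_BAND_PCT:.0f}%: {share:.0%}, meets criterion: {share >= ASSUMED_REQUIRED_SHARE}")
```

Here a single 20 percent error is enough to fail the band criterion even though the average error is under 9 percent.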

Factors That Affect Accuracy

MARD is an average, so individual readings can be off by more or less than the stated value. Several factors influence real-world accuracy:

**Day 1 warmup:** Most CGM sensors are least accurate during the first 12-24 hours after insertion, while the sensor equilibrates with the interstitial fluid. This window is longer than the formal warmup period, which is only the time before the first reading is displayed: 30 minutes for the Dexcom G7 and 60 minutes for the Libre 3. MARD is typically 2-3 percentage points higher on day 1 than on subsequent days.
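Studies can show a day-1 effect by computing MARD separately for each wear day. The sketch below does that grouping. The records and the `mard_by_day` helper are hypothetical, and the invented values were chosen to produce a gap of about 2.5 points.

```python
from collections import defaultdict


def mard_by_day(records):
    """Group (wear_day, cgm, reference) records by wear day and return {day: MARD %}."""
    ards = defaultdict(list)
    for day, cgm, ref in records:
        ards[day].append(100 * abs(cgm - ref) / ref)
    # Average the errors within each day, returned in day order.
    return {day: sum(v) / len(v) for day, v in sorted(ards.items())}


# Hypothetical records: (wear day, CGM mg/dL, reference mg/dL); not real data.
records = [
    (1, 111, 100), (1, 89, 100), (1, 133, 120),
    (2, 108, 100), (2, 91, 100), (2, 130, 120),
]
for day, value in mard_by_day(records).items():
    print(f"day {day}: MARD {value:.1f}%")
```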

**Glucose rate of change:** When blood glucose is rising or falling rapidly (more than 2 mg/dL per minute), the delay between blood and interstitial glucose produces a larger numeric gap between the two, so CGM accuracy degrades. This is particularly relevant after high-carbohydrate meals or during vigorous exercise.
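The rate of change can be estimated from consecutive readings and compared against the 2 mg/dL per minute figure above. This is a minimal sketch. The 5-minute sampling interval, the trace values, and the `rates_of_change` helper are all assumptions for illustration.

```python
RAPID_CHANGE_MG_DL_PER_MIN = 2.0  # the rapid-change figure used in this article


def rates_of_change(readings):
    """Given [(minute, mg/dL), ...] sorted by time, return (minute, rate) for each interval."""
    return [
        (t1, (g1 - g0) / (t1 - t0))
        for (t0, g0), (t1, g1) in zip(readings, readings[1:])
    ]


# Hypothetical post-meal trace, assumed to be sampled every 5 minutes.
trace = [(0, 110), (5, 118), (10, 132), (15, 148), (20, 156)]
for minute, rate in rates_of_change(trace):
    flag = "rapid" if abs(rate) > RAPID_CHANGE_MG_DL_PER_MIN else ""
    print(f"t={minute:>2} min  {rate:+.1f} mg/dL/min  {flag}")
```

In this trace the intervals ending at 10 and 15 minutes (2.8 and 3.2 mg/dL per minute) are the ones where the CGM would be expected to lag furthest behind.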

**Hypoglycemic range:** CGMs are generally less accurate at low glucose levels (below 70 mg/dL). MARD in the hypoglycemic range can be 3-5 percentage points worse than overall MARD. This is a critical consideration for insulin users who rely on CGM alerts to prevent dangerous lows.
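Part of the reason relative error looks worse at low glucose is arithmetic: the same absolute error is a larger percentage of a smaller number. The sketch below shows this. The fixed 10 mg/dL error is a hypothetical value chosen for illustration, and real sensor error does not stay constant across ranges.

```python
def relative_error_pct(error_mg_dl: float, true_glucose_mg_dl: float) -> float:
    """Express a fixed absolute error as a percentage of the true glucose value."""
    return 100 * error_mg_dl / true_glucose_mg_dl


FIXED_ERROR_MG_DL = 10  # hypothetical, chosen for illustration

for glucose in (55, 70, 100, 200):
    pct = relative_error_pct(FIXED_ERROR_MG_DL, glucose)
    print(f"{glucose:>3} mg/dL: a {FIXED_ERROR_MG_DL} mg/dL error is {pct:.1f}%")
```

A 10 mg/dL miss is about 14 percent at 70 mg/dL but only 5 percent at 200 mg/dL. This is one reason low-range accuracy is sometimes reported in mg/dL rather than as a percentage.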

**Compression events:** Sleeping on top of the sensor compresses the tissue around the filament, reducing blood flow and causing artificially low readings. These "compression lows" can last 20-60 minutes and are one of the most common sources of false low-glucose alerts.

**Sensor site:** Each CGM is approved for specific body sites (the back of the upper arm for the Libre sensors, for example), and approved sites can differ by device and by age group. Using a sensor on an unapproved body site may alter accuracy due to differences in interstitial fluid dynamics.

The Lag Time Factor

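Interstitial glucose lags blood glucose by 5-15 minutes under normal conditions. This lag is a physiological delay rather than a sensor fault: even a sensor that measured interstitial glucose perfectly would report roughly what blood glucose was several minutes earlier. Because accuracy studies compare CGM values against blood samples, the delay also contributes to the errors captured in MARD during rapid changes. When glucose is stable, the lag is irrelevant. When glucose is changing rapidly, during a post-meal spike or an insulin correction, the CGM will read lower than blood glucose while it is rising and higher while it is falling.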
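To a first approximation, the size of the lag-induced gap is the rate of change multiplied by the lag. The sketch below assumes glucose changes linearly over the lag window, which is a simplification. The `lag_gap` name is hypothetical, and the rates used are illustrative.

```python
def lag_gap(rate_mg_dl_per_min: float, lag_min: float) -> float:
    """Approximate blood-minus-CGM gap if the CGM shows glucose from lag_min minutes ago.

    Assumes glucose changes linearly over the lag window (a simplification).
    Positive result: the CGM reads lower than blood glucose (glucose rising).
    Negative result: the CGM reads higher than blood glucose (glucose falling).
    """
    return rate_mg_dl_per_min * lag_min


for rate in (3.0, -3.0, 0.0):
    for lag in (5, 15):
        print(f"rate {rate:+.0f} mg/dL/min, lag {lag:>2} min -> gap {lag_gap(rate, lag):+.0f} mg/dL")
```

At 3 mg/dL per minute, the gap ranges from 15 to 45 mg/dL across the 5-15 minute lag range. When glucose is flat, the gap is zero regardless of lag.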

Modern CGM algorithms attempt to compensate for this lag using predictive filtering, but they cannot eliminate it entirely. Understanding lag time is essential for making insulin dosing decisions and interpreting post-meal glucose peaks.
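The sketch below is not any manufacturer's algorithm. It is a naive linear extrapolation that illustrates the basic idea behind prediction in its simplest form: estimate the current trend from recent readings, then project it forward by an assumed lag. The 10-minute default lag, the two-point slope, and the `extrapolate` name are all assumptions.

```python
def extrapolate(readings, lag_min: float = 10.0) -> float:
    """Project the latest reading forward by lag_min using the slope of the last two points.

    readings: list of (minute, mg/dL) sorted by time. lag_min is an assumed lag.
    This is a naive illustration, not a real CGM algorithm.
    """
    if not readings:
        raise ValueError("need at least one reading")
    if len(readings) == 1:
        return float(readings[0][1])  # no trend available; return the reading as-is
    (t0, g0), (t1, g1) = readings[-2], readings[-1]
    slope = (g1 - g0) / (t1 - t0)     # mg/dL per minute over the last interval
    return g1 + slope * lag_min


trace = [(0, 120), (5, 135), (10, 150)]
print(extrapolate(trace))  # 180.0
```

Projecting from two points also projects their noise. A practical filter would need to smooth the trend, which is part of why prediction can reduce the lag problem but not remove it.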

The Bottom Line

A CGM with a MARD below 10 percent is generally considered clinically accurate for both diabetes management and wellness monitoring. All major CGM devices on the U.S. market in 2026 meet this threshold. When comparing devices, a 1-2 percentage point difference in MARD matters less than practical factors like wear comfort, sensor life, app quality, and insurance coverage. Accuracy is table stakes; the real differentiation happens in the user experience.
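Converting the listed MARD values into an approximate error in mg/dL puts the 1-2 point spread in concrete terms. This is a rough sketch. It evaluates every device at 120 mg/dL, a level chosen arbitrarily, and it ignores the way error varies across the glucose range. The helper name is hypothetical.

```python
# MARD values as listed in this article.
listed_mard_pct = {
    "Abbott FreeStyle Libre 3": 7.9,
    "Dexcom G7": 8.2,
    "Medtronic Guardian 4": 8.7,
    "Dexcom Stelo": 9.0,
}


def average_error_mg_dl(mard_pct: float, glucose_mg_dl: float) -> float:
    """Rough average error implied by a MARD at one glucose level (ignores range effects)."""
    return mard_pct / 100 * glucose_mg_dl


for device, m in listed_mard_pct.items():
    print(f"{device}: about {average_error_mg_dl(m, 120):.1f} mg/dL at 120 mg/dL")
```

At this level, the spread between the lowest and highest listed MARD is only about 1.3 mg/dL on an average reading.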